
After telegraphing the move in media appearances, OpenAI has launched a tool that attempts to distinguish between human-written and AI-generated text, such as the text produced by the company’s own ChatGPT and GPT-3 models. The classifier is not particularly accurate (its success rate is around 26%, according to OpenAI), but OpenAI argues that, used in combination with other methods, it can help prevent AI text generators from being abused.

“The classifier aims to help mitigate false claims that AI-generated text was written by a human. However, it still has a number of limitations, so it should be used as a complement to other methods of determining the source of text instead of being the primary decision-making tool,” an OpenAI spokesperson told TechCrunch in an email. “We are making this initial classifier available to get feedback on whether tools like this are useful, and we hope to share improved methods in the future.”

As enthusiasm for generative AI, particularly text-generating AI, grows, critics are calling on the creators of these tools to take steps to mitigate their potentially harmful effects. Some of the largest U.S. school districts have banned ChatGPT on their networks and devices, citing concerns about its impact on student learning and the accuracy of the content the tool generates. Sites including Stack Overflow have also banned users from sharing ChatGPT-generated content, saying that the AI makes it too easy for users to flood discussion threads with dubious answers.

OpenAI’s classifier (aptly called the OpenAI AI Text Classifier) is architecturally interesting. Like ChatGPT, it is an AI language model trained on many examples of publicly available text from the web. But unlike ChatGPT, it is fine-tuned to predict how likely it is that a piece of text was generated by AI, and not just by ChatGPT but by any text-generating AI model.

More specifically, OpenAI trained the OpenAI AI Text Classifier on text from 34 text-generating systems from five different organizations, including OpenAI itself. This text was paired with similar (but not exactly similar) human-written text from Wikipedia, from websites extracted from links shared on Reddit, and from a set of “human demonstrations” collected for a previous OpenAI text-generating system. (OpenAI admits in a support document, however, that it may have inadvertently misclassified some AI-written text as human-written “given the proliferation of AI-generated content on the Internet.”)

Importantly, the OpenAI Text Classifier will not work on just any text. It needs a minimum of 1,000 characters, or roughly 150 to 250 words. It does not detect plagiarism, a particularly unfortunate limitation given that text-generating AI has been shown to regurgitate the text it was trained on. OpenAI also says that, because of its English-forward data set, the classifier is more likely to get things wrong on text written by children or in languages other than English.

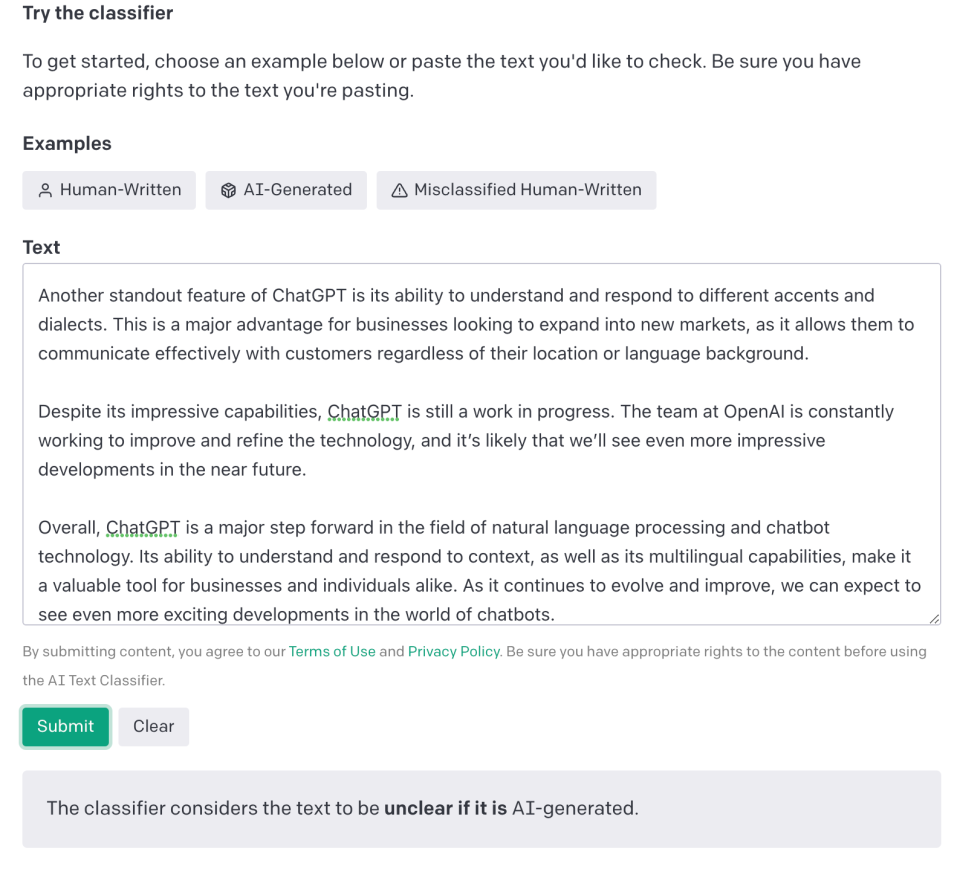

The detector hedges its answer a bit when evaluating whether a given piece of text is AI-generated. Depending on its confidence level, it labels text as “very unlikely” AI-generated (less than a 10% chance), “unlikely” AI-generated (a 10% to 45% chance), “unclear if it is” AI-generated (a 45% to 90% chance), “possibly” AI-generated (a 90% to 98% chance) or “likely” AI-generated (more than a 98% chance).
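
Those confidence bands amount to a simple threshold lookup. Here is a minimal Python sketch, assuming the classifier's score is available as a probability between 0 and 1. The function name, the label strings and the treatment of values that sit exactly on a boundary are illustrative assumptions, not OpenAI's implementation.

```python
def label_for_probability(p_ai):
    """Map a probability that text is AI-generated to a confidence band.

    Band edges follow the percentages reported in the article. Which band
    an exact boundary value (0.10, 0.45, 0.90) falls into is an assumption.
    """
    if not 0.0 <= p_ai <= 1.0:
        raise ValueError("p_ai must be between 0 and 1")
    if p_ai < 0.10:
        return "very unlikely"
    if p_ai < 0.45:
        return "unlikely"
    if p_ai < 0.90:
        return "unclear"
    if p_ai <= 0.98:
        return "possibly"
    # Only scores strictly above 98% reach the top band.
    return "likely"
```

Note how wide the middle band is: under these thresholds, any score from 45% up to 90% produces the same noncommittal label.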

Out of curiosity, I fed some text through the classifier to see how it would manage. It confidently and correctly predicted that several paragraphs from a TechCrunch article about Meta’s Horizon Worlds and a snippet from an OpenAI support page were not AI-generated. But the classifier had a tougher time with article-length text from ChatGPT, ultimately failing to classify it altogether. It did, however, successfully spot ChatGPT output taken from a Gizmodo article about ChatGPT itself.

According to OpenAI, the classifier incorrectly labels human-written text as AI-written 9% of the time. That mistake did not occur in my testing, but I chalk that up to the small sample size.
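
To see what those two rates mean in practice, Bayes' rule gives the share of flagged texts that are actually AI-written. The sketch below assumes that the 26% figure is the share of AI-written text the classifier correctly flags, and it takes the 9% figure as the false-positive rate. The function name and the example shares of AI-written text are hypothetical.

```python
def share_of_flags_that_are_ai(true_positive_rate, false_positive_rate, ai_share):
    """Return P(text is AI-written | classifier flagged it), via Bayes' rule.

    ai_share is the assumed fraction of submitted texts that are AI-written.
    """
    flagged_ai = true_positive_rate * ai_share
    flagged_human = false_positive_rate * (1.0 - ai_share)
    total_flagged = flagged_ai + flagged_human
    if total_flagged == 0:
        raise ValueError("no text would be flagged with these inputs")
    return flagged_ai / total_flagged


# Hypothetical mixes of submitted text: half AI-written, then one in ten.
even_mix = share_of_flags_that_are_ai(0.26, 0.09, 0.5)
mostly_human = share_of_flags_that_are_ai(0.26, 0.09, 0.1)
```

Under these assumptions, about 74% of flags are correct when half of the submitted text is AI-written, but only about 24% when one text in ten is. That is one reason a flag on its own is weak evidence.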

Image Credits: OpenAI

On a practical level, I found the classifier not particularly useful for evaluating shorter pieces of writing. A 1,000-character minimum is a difficult threshold to reach in the realm of messages such as emails (at least the ones I receive regularly). And the limitations give pause: OpenAI emphasizes that the classifier can be evaded by changing a few words or clauses in the generated text.

That’s not to suggest that the classifier is useless. But in its current state, it will not stop determined fraudsters (or students, for that matter).

The question is whether other tools will. Something of a cottage industry has sprung up to meet the demand for AI-generated text detectors. GPTZero, developed by a Princeton University student, uses criteria including “perplexity” (the complexity of text) and “burstiness” (the variation between sentences) to detect whether text might have been written by an AI. Plagiarism detector Turnitin is developing its own AI-generated text detector. Beyond those, a Google search turns up at least half a dozen other apps that claim to be able to separate the AI-generated wheat from the human-generated chaff, to torture the metaphor.
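
To make those two criteria concrete, here is a toy Python sketch. It assumes a smoothed unigram (word-frequency) model built from a reference text stands in for a real language model, and that burstiness is measured as the spread of per-sentence perplexity. Both are simplifying assumptions for illustration, and every name in the block is hypothetical. This is not how any of the tools mentioned above is actually implemented.

```python
import math
import re
from collections import Counter
from statistics import pstdev


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def split_sentences(text):
    """Split on whitespace that follows ., ! or ?, dropping word-free parts."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [part for part in parts if tokenize(part)]


class UnigramModel:
    """Word-frequency model with add-one smoothing (a toy stand-in)."""

    def __init__(self, reference_text):
        self.counts = Counter(tokenize(reference_text))
        self.total = sum(self.counts.values())
        self.vocab_size = len(self.counts) + 1  # one extra slot for unseen words

    def log_prob(self, word):
        return math.log((self.counts[word] + 1) / (self.total + self.vocab_size))

    def perplexity(self, text):
        """exp of the average negative log-probability per word."""
        words = tokenize(text)
        if not words:
            raise ValueError("text contains no words")
        avg_log_prob = sum(self.log_prob(w) for w in words) / len(words)
        return math.exp(-avg_log_prob)


def burstiness(model, text):
    """Population standard deviation of per-sentence perplexity."""
    scores = [model.perplexity(s) for s in split_sentences(text)]
    return pstdev(scores) if len(scores) > 1 else 0.0
```

In this framing, text that the model finds uniformly predictable scores low on both measures, while writing that mixes plain and surprising sentences scores higher on burstiness.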

It looks likely to become a cat-and-mouse game. As text-generating AI improves, so will the detectors, in a never-ending back-and-forth similar to the one between cybercriminals and security researchers. And as OpenAI writes, while classifiers may help in certain circumstances, they will never be the sole reliable piece of evidence for deciding whether text was generated by AI.

In short, there is no silver bullet for the problems posed by AI-generated text. Quite possibly, there never will be.
